Huawei Technologies Co., Ltd

Data storage system OceanStor A800

Description

The parameter counts of large AI models have grown exponentially, from hundreds of billions to trillions. Training sets have also evolved from unimodal to multimodal (images, videos, etc.), resulting in a data explosion that has multiplied data volumes thousands of times over and brought unprecedented challenges.

First, poor loading performance for massive numbers of small files undermines training efficiency.

Second, large model parameters are frequently being optimized, which makes training platforms unstable: service interruptions occur every two days on average, and TB-scale checkpoints must be written and read frequently, which is time-consuming.

Third, because individual GPUs are not efficient enough, training 100-billion-parameter models requires massive GPU resources, and ensuring millisecond-level real-time inference for conversational applications is challenging.

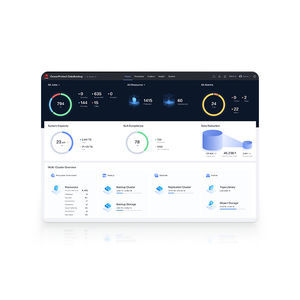

OceanStor A800 delivers a staggering 24 million IOPS and 500 GB/s of bandwidth in a single controller enclosure, making it a high-performance storage solution for AI knowledge repositories. With four times the performance of the nearest competitor, a single storage system is all you need both for loading training sets made up of small files and for high-bandwidth resumable training. You'll also benefit from a leading built-in vector knowledge repository, which supports large model inference applications at more than 250,000 QPS, accelerating vector retrieval and delivering millisecond-level inference responses.
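To put the quoted peak figures in perspective, the following back-of-envelope sketch converts them into idealized checkpoint and small-file load times. The checkpoint size and the number of I/Os per file are hypothetical assumptions, not vendor data, and the sketch assumes the full peak rate is sustained with no overhead, so the results are best-case estimates rather than measured performance.

```python
# Back-of-envelope estimates from the quoted peak figures.
# Assumptions (hypothetical, not vendor data): the checkpoint size, the
# number of I/Os per small file, and that the peak rate is fully sustained.

PEAK_BANDWIDTH_GB_S = 500     # quoted bandwidth per controller enclosure
PEAK_IOPS = 24_000_000        # quoted IOPS per controller enclosure


def checkpoint_seconds(checkpoint_tb: float,
                       bandwidth_gb_s: float = PEAK_BANDWIDTH_GB_S) -> float:
    """Idealized time to write or read one checkpoint (1 TB = 1000 GB)."""
    return checkpoint_tb * 1000 / bandwidth_gb_s


def small_files_per_second(ios_per_file: int, iops: int = PEAK_IOPS) -> float:
    """Idealized small-file load rate if each file costs `ios_per_file` I/Os."""
    return iops / ios_per_file


if __name__ == "__main__":
    # Hypothetical 2 TB checkpoint
    print(f"2 TB checkpoint: {checkpoint_seconds(2.0):.1f} s")
    # Hypothetical 2 I/Os per small file (e.g. one metadata lookup, one data read)
    print(f"Small files per second: {small_files_per_second(2):,.0f}")
```

Under these assumptions, a 2 TB checkpoint takes about 4 seconds to write or read, and roughly 12 million small files could be loaded per second; real figures depend on file sizes, client count, and protocol overhead.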

Ultra-high performance

Our groundbreaking data-control separation architecture lets data flow directly to disks, reducing CPU usage and delivering 24 million IOPS per controller enclosure.
